This week I was at a customer site that is using vSAN 6.2 as the foundation for their upcoming virtual desktop infrastructure (seems like 2016 really is the year of VDI). I love vSAN and believe that at the moment it’s a great fit for many dedicated use cases within the virtualization field.

During some load and failover tests of the vSAN installation I noticed something regarding the I/O queues within the vSAN stack and, to be honest, I am not quite sure what the risks, mitigations and therefore the correct actions are.

We opened a VMware ticket in parallel, but if you have any more in-depth knowledge about this topic, please let me know, since this might be interesting to more people (the number of vSAN implementations is increasing).

Since the integration of flash/SSD in the performance/cache tier of vSAN, performance is great compared to classical HDD-based solutions.

To ensure a good performance level, Duncan Epping and Cormac Hogan have discussed the queuing topics in some blog posts and in the official vSAN troubleshooting reference manual (great document, btw).

….

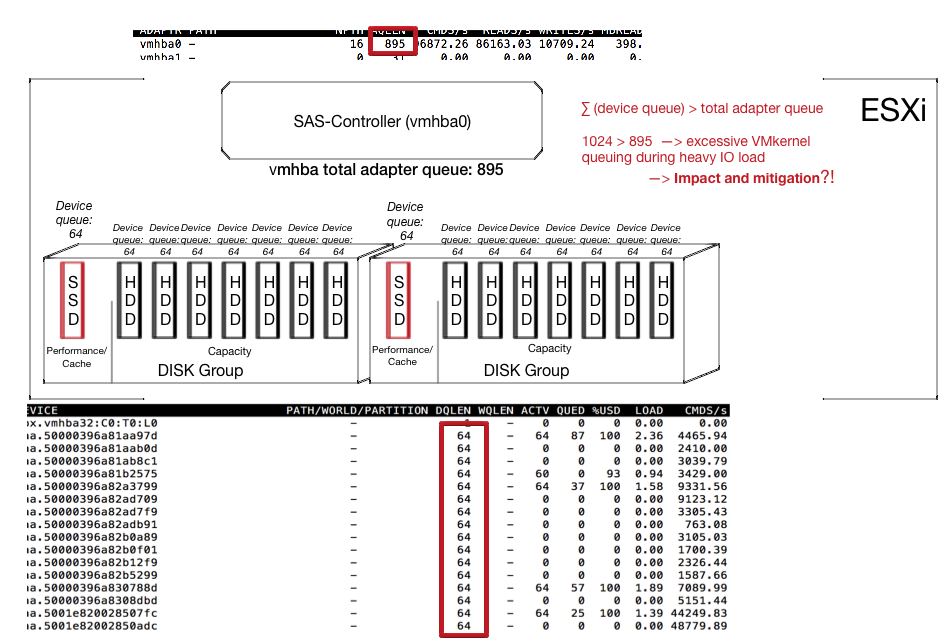

Going through the documents I am still missing the consequences or impact when the adapter queue depth < ∑ (device queue depth), i.e. when the sum of all device queue depths exceeds what the adapter can queue. This can happen when you use a single SAS controller and fully equip your ESXi host to reach the vSAN configuration maximums for disk groups, disks, etc.
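To make the comparison between the adapter queue depth and the sum of the device queue depths concrete, here is a minimal Python sketch. All numbers in it (adapter queue depth of 600, 40 devices with a queue depth of 32 each) are hypothetical values I picked for illustration, not the values from the customer setup.

```python
from typing import Sequence


def oversubscription(adapter_queue_depth: int, device_queue_depths: Sequence[int]) -> float:
    """Return sum(device queue depths) / adapter queue depth.

    A ratio above 1.0 means the devices together can accept more
    outstanding I/Os than the single adapter in front of them.
    """
    if adapter_queue_depth <= 0:
        raise ValueError("adapter_queue_depth must be positive")
    return sum(device_queue_depths) / adapter_queue_depth


# Hypothetical host: one controller, 40 devices behind it (assumed values).
adapter_qd = 600
device_qds = [32] * 40

ratio = oversubscription(adapter_qd, device_qds)
print(f"sum of device queues: {sum(device_qds)}")
print(f"adapter queue depth:  {adapter_qd}")
print(f"ratio:                {ratio:.2f}")
if ratio > 1.0:
    print("The device queues together exceed the adapter queue depth.")
```

The sketch only does the arithmetic. It says nothing about how ESXi actually behaves once the ratio goes above 1, which is exactly the open question of this post.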

What I noticed during the load tests was excessive kernel queuing (ESXTOP: KAVG/QAVG > 5 ms) during I/O peaks. I have seen in the past that when kernel queuing is excessive, the whole ESXi host reacts a little bit ‘sluggish’.

I am not sure whether it is necessary to take action here, or what the risk might be if we leave the setup as it is (the single-controller design decision is a non-negotiable constraint).

I can imagine that reducing the queue depth of the capacity devices to 54 might be suitable, so that the sum of the device queues does not reach the adapter limit. As a consequence, the queuing would no longer take place within ESXi but within the guest OS of the VMs, which moves stress away from the ESXi I/O stack.
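The idea of capping the capacity device queue depth can be sketched as a small calculation: take the adapter queue depth, subtract what the cache devices may use, and divide the rest evenly across the capacity devices. The function name and all input numbers below are assumptions for illustration only. They are not the inputs behind the value of 54 mentioned above.

```python
from typing import Sequence


def max_capacity_queue_depth(
    adapter_queue_depth: int,
    cache_queue_depths: Sequence[int],
    num_capacity_devices: int,
) -> int:
    """Largest per-capacity-device queue depth so that the sum of all
    device queue depths stays at or below the adapter queue depth."""
    if num_capacity_devices <= 0:
        raise ValueError("num_capacity_devices must be positive")
    remaining = adapter_queue_depth - sum(cache_queue_depths)
    if remaining <= 0:
        raise ValueError("cache devices alone already fill the adapter queue")
    # Integer division rounds down, so the total never exceeds the adapter limit.
    return remaining // num_capacity_devices


# Hypothetical example: adapter queue depth 1000, two cache devices
# with a queue depth of 100 each, 14 capacity devices (assumed values).
cap_qd = max_capacity_queue_depth(1000, [100, 100], 14)
total = 14 * cap_qd + 200
print(f"capacity device queue depth: {cap_qd}")
print(f"sum of all device queues:    {total}")
```

Whether an even split like this is the right policy is a separate question; it is simply the most obvious way to keep the sum under the adapter limit.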

But that’s only one option, I guess, and I am really looking forward to hearing from one of the vSAN deep-tech experts out there, or about your experience with this topic. If any further findings show up, I will update the post.

We are currently pondering the same type of question: what is the appropriate relationship between HBA, cache and capacity queue depths? Did you ever get more info from VMware?

I already received some information, but not enough to put together a blog post. I am trying to find someone to chat with during VMworld. Anyway, according to the information I have so far, it shouldn’t be an issue at all. So enjoy vSAN ;-)

I hope to enhance this post within the next few weeks.

Very interesting question! We plan to use an HP DL380 G9 with 24 SAS disks and 3 HBAs (1011 queue depth). We are very interested in the performance of this configuration. The queue depth of one SAS disk is 256. Is it necessary to reduce the queue depth for all drives? Looking forward to your post update!

Sorry for the delay. It is not recommended to change anything here regarding the queue depth, etc. I am still hunting for answers so I can understand the whole I/O queue stack (and be able to reproduce it). Unfortunately, my projects and jobs are currently holding me back from making progress here.