PowerCLI (based on PowerShell) is one of those things that really boosted my productivity in the VMware field. It is an easy-to-use product that lets you do incredible things within a vSphere… wait… VMware environment (it also includes cmdlets for vCloud, vRealize, View, …). Bulk operations? Easy as that… Gathering some data for reports? Not a big problem at all… Delivering hot coffee to your desk? OK, OK… not that incredible (yet)…

In my daily business I still meet many people who aren’t really into PowerShell/PowerCLI or are kind of afraid of using it. Let me give you some arguments why learning PowerCLI is a great idea, even though its principles come close to ‘programming’ *WE-HATE-PROGRAMMING-ANGST*.

1.) Become a better admin / consultant (and even architect)

As administrators (especially those coming from the Windows world) we spend a lot of time configuring the same things over and over. Doing that in a graphical, mouse-driven interface takes a long time. Enabling SSH on 5 ESXi hosts, including the login to the Web Client, takes an incredible number of clicks (in the 5-host scenario you probably need 35 clicks, 0.3 meters of scrolling and 142 seconds in the Web Client).

How do we solve it with PowerCLI?

Get-VMHost | Get-VMHostService | Where-Object {$_.Key -eq "TSM-SSH"} | Start-VMHostService

Much easier, especially if you archive your helper scripts somewhere so you can re-use them whenever there is a need (I know my GitHub repository is small, but it’s growing).
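To give an idea of what such an archived helper could look like, here is a minimal sketch that wraps the one-liner above in a reusable function. The function name Enable-HostSsh and its parameter are my own invention for illustration, not part of PowerCLI, and the sketch assumes you are already connected via Connect-VIServer.

```powershell
# Hypothetical helper: the name Enable-HostSsh and the parameter are illustrative only.
# Assumes the PowerCLI module is loaded and Connect-VIServer has been run.
function Enable-HostSsh {
    [CmdletBinding(SupportsShouldProcess)]
    param(
        # ESXi host objects, e.g. the output of Get-VMHost
        [Parameter(Mandatory, ValueFromPipeline)]
        [object[]]$VMHost
    )
    process {
        foreach ($esx in $VMHost) {
            # Pick the SSH service of this host
            $ssh = Get-VMHostService -VMHost $esx | Where-Object { $_.Key -eq 'TSM-SSH' }

            # Start it only if it is not running yet; -WhatIf is honored
            if (-not $ssh.Running -and $PSCmdlet.ShouldProcess($esx.Name, 'Start SSH service')) {
                $ssh | Start-VMHostService | Out-Null
            }
        }
    }
}
```

With that in your script folder, something like Get-VMHost | Enable-HostSsh -WhatIf first shows which hosts would be touched before you run it for real.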

2.) Easy to learn – hard to master

The best way to describe PowerCLI / PowerShell is by comparing it with Mario Kart, one of the best (and funniest) video games the world has ever seen. Why is that? Because it is so easy to get started that even beginners might win with a little bit of luck. But once you aim for the top, you will realise that there is so much you can do better.

The same is true for PowerCLI. Instead of getting a lame ‘hello world’ output in the beginning, you can create something real with things you are already familiar with (VMs, gathering useful data, etc.).

BUT remember… Mario Kart is a game… if you lose, you lose and start all over again… In real life you would not want to go to the racetrack as a beginner and start throwing bananas at other cars (at least I hope you wouldn’t do that). Learn and improve your PowerCLI skills in test environments, and only take a script into production if you understand what it does and what impact and risk it carries.

One-liners like Get-VM | Stop-VM can create huge service impacts within your environment with minimum effort.
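A small sketch of how to take the edge off such a one-liner: PowerCLI cmdlets that change something generally support the standard PowerShell -WhatIf and -Confirm switches. The name pattern 'test-*' below is just a made-up example, and an active Connect-VIServer session is assumed.

```powershell
# Assumes an active session created with Connect-VIServer.

# -WhatIf only reports what would be done; no VM is stopped.
Get-VM | Stop-VM -WhatIf

# Narrow the scope first, then let PowerCLI ask before each action.
# 'test-*' is a hypothetical naming pattern for lab VMs.
Get-VM -Name 'test-*' | Stop-VM -Confirm
```

Running the -WhatIf variant first is a cheap habit: you see the full list of affected VMs before anything actually happens.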

3.) Getting familiar with programmatic and automation approaches

The datacenter is getting more and more complex. Every component is abstracted multiple times and ends up as an API-programmable resource. This creates new challenges (complexity) but also gives us new opportunities in terms of service delivery speed and automation. In the future it will become more and more important to create (and maintain) processes and workflows. And no matter how you are going to implement them (Orchestrator, PowerShell, etc.), the logical way of getting there is always the same and always related to approaches coming from the programming field.

Learning PowerCLI means getting familiar with those approaches. You need to know why we need if/else/switch statements. Why do we need loops? What are data types? Why is object-oriented thinking so valuable?
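To make those building blocks a bit more tangible, here is a minimal sketch that uses a loop, a switch, an if/else, a typed variable and object properties in one go. The threshold of 8 vCPUs is an arbitrary value I picked for illustration, and an active Connect-VIServer session is assumed.

```powershell
# Assumes an active session created with Connect-VIServer.
foreach ($vm in Get-VM) {                 # loop: visit every VM object
    # switch: translate the PowerState property into a friendlier word
    switch ($vm.PowerState) {
        'PoweredOn'  { $state = 'running' }
        'PoweredOff' { $state = 'stopped' }
        default      { $state = 'suspended' }
    }

    # data type: NumCpu is a number, so it can be compared numerically
    [int]$cpuCount = $vm.NumCpu

    # if/else: 8 is a hypothetical threshold for a "large" VM
    if ($cpuCount -gt 8) {
        Write-Output "$($vm.Name) is $state and has $cpuCount vCPUs (large)"
    } else {
        Write-Output "$($vm.Name) is $state and has $cpuCount vCPUs"
    }
}
```

Note that $vm is an object with properties like Name, PowerState and NumCpu, not a line of text you have to parse. That is the object-oriented thinking mentioned above.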

PowerShell (and therefore PowerCLI) abstracts away much of that complexity, but you will still run into these concepts from time to time. So if you are curious, you will gain a deeper insight every time you deal with those topics.

Once you are familiar with how to logically automate specific workflows (because you created your own automation mechanisms), you don’t really care which solution carries out the automation.

4.) The PowerCLI community is one of the best I have seen in the tech-field

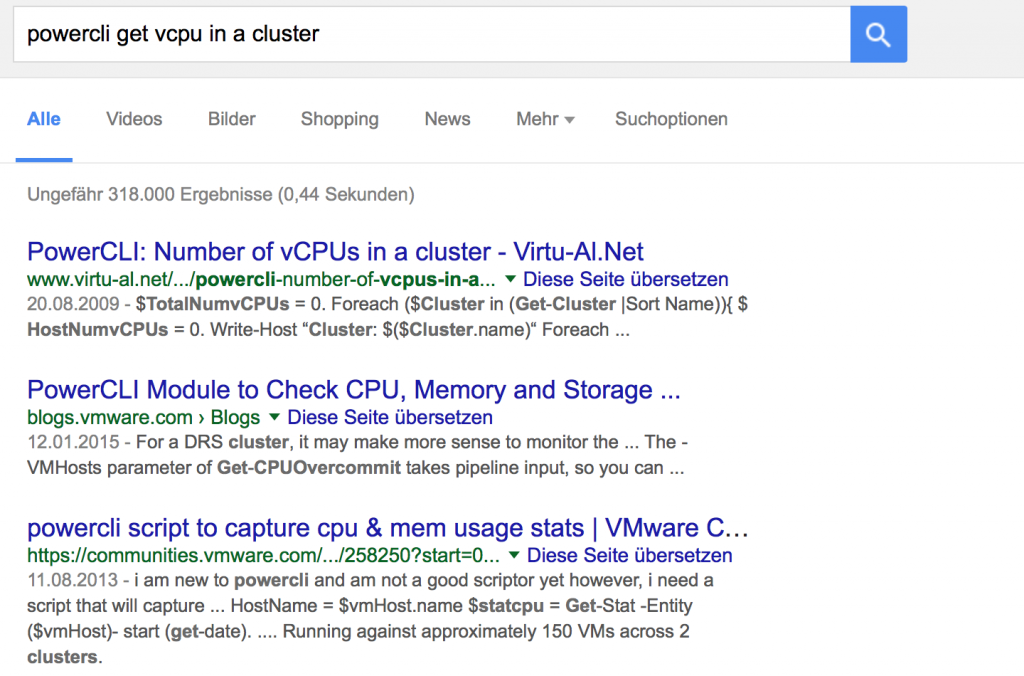

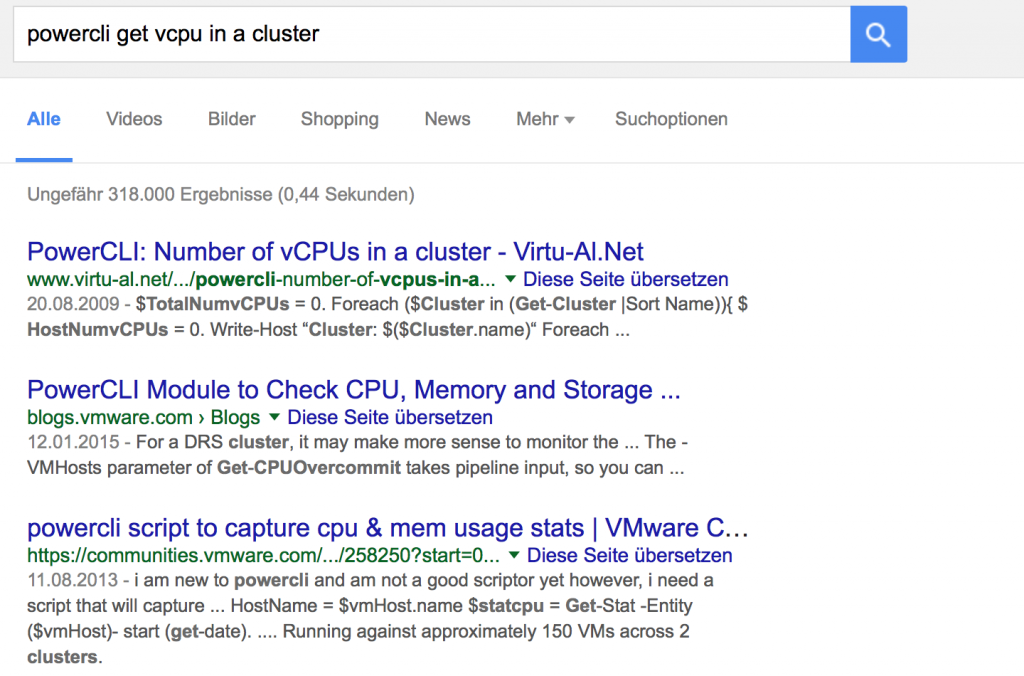

There is nothing more to say. For almost every problem someone has already created a solution and posted it in a blog or forum. So what to do? Google for PowerCLI <what you want to achieve>, e.g. PowerCLI get vCPU in a cluster.

In 95% of the cases you will find a suitable solution on the first page of Google (maybe you need to edit the final script a little bit).
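For the vCPU search above, the kind of snippet you typically end up with could look like the following sketch. The cluster name 'Cluster01' is a placeholder, and an active Connect-VIServer session is assumed.

```powershell
# Assumes an active session created with Connect-VIServer.
# 'Cluster01' is a hypothetical cluster name; replace it with one of yours.
$clusterName = 'Cluster01'

# Collect all VMs of the cluster (wrapped in @() so it is always an array)
$vms = @(Get-Cluster -Name $clusterName | Get-VM)

# Sum up the configured vCPUs of those VMs
$total = ($vms | Measure-Object -Property NumCpu -Sum).Sum

"$clusterName has $total vCPUs configured across $($vms.Count) VMs"
```

This counts configured vCPUs of all VMs, powered on or not. If you only care about running VMs, you would filter on PowerState before summing.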

And if you don’t find a solution, just post your question in the official VMware PowerCLI community.

p.s. In 4 out of 5 posts Luc Dekens will help you within 72 seconds after you have posted a question there. This guy brings so much value to the PowerCLI community. Thanks for that.

5.) For nearly every problem a script or a snippet is already available

<If you have just read passage 4) too quickly…. you should read it in more detail now ;-) >

6.) PowerCLI forces you to work more accurately

Yes, PowerCLI is pretty easy, but it is also a text-based interface. And when you configure something via text, you typically need to know 100% what you are doing (in GUIs it is much easier to click your way through by trial and error).

Planning in advance what you are going to do, while holding a powerful tool in your hands that can shut down your whole environment within seconds, will force you to work more accurately and think further ahead in your daily work. A skill that is IMO very, very important.

Always remember what the uncle of a good friend’s friend told some guy:

“With great Power(CLI) comes great responsibility”